
I. Basic Environment

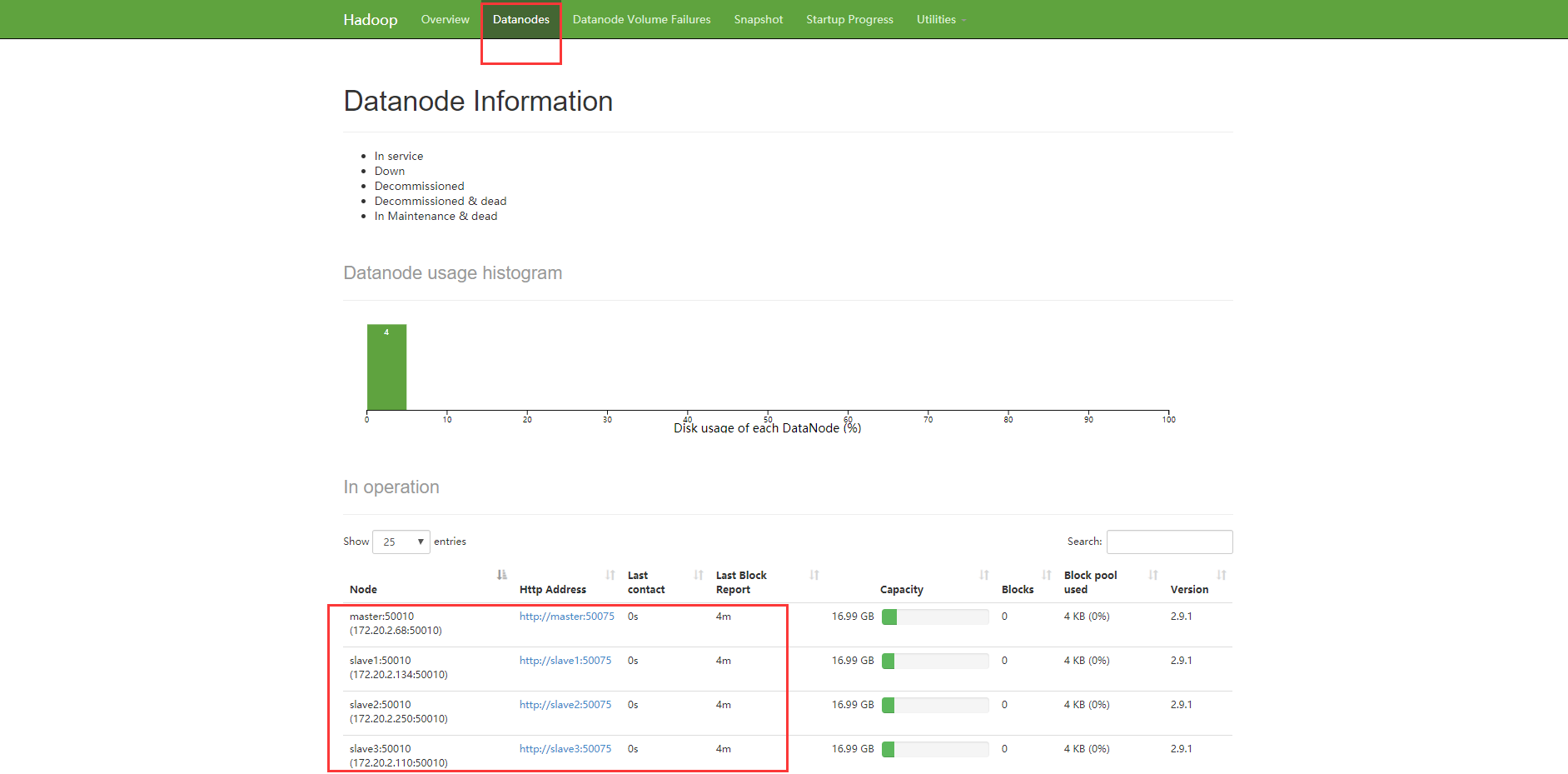

1. IP addresses and hostnames of the four virtual machines

172.20.2.68 master

172.20.2.134 slave1

172.20.2.250 slave2

172.20.2.110 slave3

2. HDFS role assignment:

| Node IP | Hostname | HDFS roles |
| --- | --- | --- |
| 172.20.2.68 | master | DataNode, NameNode |
| 172.20.2.134 | slave1 | DataNode |
| 172.20.2.250 | slave2 | DataNode, SecondaryNameNode |
| 172.20.2.110 | slave3 | DataNode |

3. YARN role assignment:

| Node IP | Hostname | YARN roles |
| --- | --- | --- |
| 172.20.2.68 | master | NodeManager |
| 172.20.2.134 | slave1 | NodeManager |
| 172.20.2.250 | slave2 | NodeManager |
| 172.20.2.110 | slave3 | NodeManager, ResourceManager |

II. Basic Configuration

1. Disable the firewall [run on all nodes]

[root@master ~]# systemctl stop firewalld

[root@master ~]# systemctl disable firewalld

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

Removed symlink /etc/systemd/system/basic.target.wants/firewalld.service.

2. Install the JDK and configure environment variables [run on all nodes]

yum install java-1.8.0-openjdk.x86_64 java-1.8.0-openjdk-devel -y
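The JAVA_HOME value in the profile script below hard-codes one specific OpenJDK build (1.8.0.191.b12-1.el7_6). If yum installs a newer update, that directory will not exist. The following is a minimal sketch for looking up the real JDK directory first. It assumes that the `javac` installed by java-1.8.0-openjdk-devel is on the PATH as a symlink chain (through `alternatives`) ending in the JDK's `bin/` directory. The function name `java_home_from` is hypothetical.

```sh
# Resolve the JDK home directory from a javac executable.
# Assumption: after following every symlink
# (e.g. /usr/bin/javac -> /etc/alternatives/javac -> ...), javac sits at <JDK_HOME>/bin/javac.
java_home_from() (
    javac_path=$(readlink -f "$1") || exit 1   # follow all symlinks
    bin_dir=$(dirname "$javac_path")            # <JDK_HOME>/bin
    dirname "$bin_dir"                          # <JDK_HOME>
)

# Usage on a node; prints the directory to put into JAVA_HOME:
#   java_home_from "$(command -v javac)"
```

Compare the printed path with the one written into /etc/profile.d/java.sh, and adjust the file if they differ.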

cat >/etc/profile.d/java.sh<<EOF

export JAVA_HOME=/usr/lib/jvm/java-1.8.0-openjdk-1.8.0.191.b12-1.el7_6.x86_64

export CLASSPATH=.:\$JAVA_HOME/jre/lib/rt.jar:\$JAVA_HOME/lib/dt.jar:\$JAVA_HOME/lib/tools.jar

export PATH=\$PATH:\$JAVA_HOME/bin

EOF

3. Create the hadoop user and set its password [run on all nodes]

[root@master ~]# useradd hadoop

[root@master ~]# echo "password" | passwd hadoop --stdin

Changing password for user hadoop.

passwd: all authentication tokens updated successfully.

4. Configure /etc/hosts [run on all nodes]
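Each node needs the four cluster entries shown in /etc/hosts below. The following is a minimal sketch, run as root on each node, that appends an entry only when its hostname is not already listed, so running it twice is harmless. The function name `add_cluster_hosts` is hypothetical. The file path is a parameter so the function can be tried on a scratch copy first.

```sh
# Append the cluster's IP/hostname pairs to a hosts file.
# Any hostname that already appears on a non-comment line is skipped (idempotent).
add_cluster_hosts() (
    hosts_file=${1:-/etc/hosts}
    while read -r ip name; do
        # Match the hostname as a whole word after some whitespace.
        if ! grep -Eq "^[^#]*[[:space:]]$name([[:space:]]|\$)" "$hosts_file"; then
            printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
        fi
    done <<EOF
172.20.2.68 master
172.20.2.134 slave1
172.20.2.250 slave2
172.20.2.110 slave3
EOF
)

# Usage (as root on every node):
#   add_cluster_hosts /etc/hosts
```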

[root@master ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.20.2.68 master

172.20.2.134 slave1

172.20.2.250 slave2

172.20.2.110 slave3

5. Configure passwordless SSH login [run on all nodes]

[root@master ~]# su - hadoop

[hadoop@master ~]$ ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/home/hadoop/.ssh/id_rsa):

Created directory '/home/hadoop/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /home/hadoop/.ssh/id_rsa.

Your public key has been saved in /home/hadoop/.ssh/id_rsa.pub.

The key fingerprint is:

14:ea:1d:7b:61:3d:5b:42:52:dc:41:ad:39:e5:d1:a8 hadoop@master

The key's randomart image is:

+--[ RSA 2048]----+

| . .oooo+.|

| . . +. o.+|

| . o o +..=.|

| . o + .E=+ .|

| . S . . . |

| . |

| |

| |

| |

+-----------------+

[hadoop@master ~]$ ssh-copy-id 172.20.2.68

[hadoop@master ~]$ ssh-copy-id 172.20.2.134

[hadoop@master ~]$ ssh-copy-id 172.20.2.250

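[hadoop@master ~]$ ssh-copy-id 172.20.2.110

III. Install Hadoop

3.1 Extract the installation package

1. Download [run on the master node]

[hadoop@master ~]$ wget http://mirrors.hust.edu.cn/apache/hadoop/common/hadoop-2.9.1/hadoop-2.9.1.tar.gz

(If this mirror no longer carries 2.9.1, past Apache releases remain available under https://archive.apache.org/dist/hadoop/common/.)

2. As the hadoop user, create the installation directory /home/hadoop/apps and the data directory /home/hadoop/data [run on the master node]: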

[hadoop@master ~]$ pwd

/home/hadoop

[hadoop@master ~]$ mkdir apps

[hadoop@master ~]$ mkdir data

[hadoop@master ~]$ ls

apps data hadoop-2.9.1.tar.gz

3. Extract the package into the apps directory [run on the master node]:

[hadoop@master ~]$ tar -zxf hadoop-2.9.1.tar.gz -C apps/

[hadoop@master ~]$ ll apps/

total 0

drwxr-xr-x. 9 hadoop hadoop 149 Apr 16 2018 hadoop-2.9.1

3.2 Master node configuration

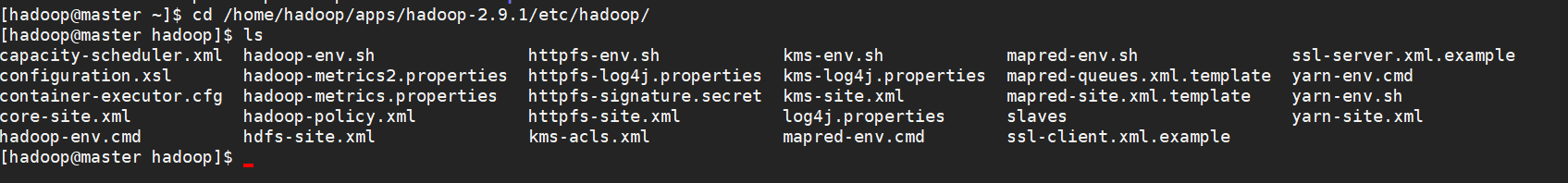

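1. Master node configuration

Change to the configuration directory: /home/hadoop/apps/hadoop-2.9.1/etc/hadoop

2. Configure core-site.xml

fs.defaultFS: the file-system URI that clients use to reach the NameNode over the hdfs protocol. It can be a host plus port, or the name of a NameNode service (in an HA setup, such a service can contain several NameNodes).

hadoop.tmp.dir: the base directory for temporary files that Hadoop writes while the cluster is running.

[hadoop@master hadoop]$ vim core-site.xml

<configuration>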

<property>

<name>fs.defaultFS</name>

<value>hdfs://master:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/hadoop/data/hadoopdata</value>

</property>

</configuration>

3. Configure hdfs-site.xml

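dfs.namenode.name.dir: where the NameNode stores its metadata, that is, the metadata for every file in HDFS.

dfs.datanode.data.dir: where each DataNode stores its data, that is, the directory that holds the blocks.

dfs.replication: the HDFS replication factor. When a file is uploaded and split into blocks, this is the number of copies kept of each block. The default is 3.

dfs.secondary.http.address: the host and port on which the SecondaryNameNode runs. It should be a different node from the NameNode. (In Hadoop 2.x this is the deprecated name of dfs.namenode.secondary.http-address; the old name is still accepted.)

[hadoop@master hadoop]$ vim hdfs-site.xml

<configuration>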

<property>

<name>dfs.namenode.name.dir</name>

<value>/home/hadoop/data/hadoopdata/name</value>

<description>To keep the metadata safe, several different directories are usually configured</description>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/home/hadoop/data/hadoopdata/data</value>

<description>DataNode data storage directory</description>

</property>

<property>

<name>dfs.replication</name>

<value>2</value>

<description>Number of replicas stored for each HDFS block; the default is 3</description>

</property>

<property>

<name>dfs.secondary.http.address</name>

<value>slave2:50090</value>

<description>Node on which the SecondaryNameNode runs; a different node from the NameNode</description>

</property>

</configuration>

4. Configure mapred-site.xml

mapreduce.framework.name: sets the MapReduce framework to yarn. In Hadoop 2, MapReduce jobs run on top of the YARN resource-management system.

[hadoop@master hadoop]$ cp mapred-site.xml.template mapred-site.xml

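[hadoop@master hadoop]$ vim mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

5. Configure yarn-site.xml

yarn.resourcemanager.hostname: the hostname of the YARN ResourceManager, the cluster-wide resource manager. Its IPC and web addresses default to this host.

yarn.nodemanager.aux-services: the auxiliary service that the NodeManagers provide to MapReduce jobs (usually the shuffle service, mapreduce_shuffle).

[hadoop@master hadoop]$ vim yarn-site.xml

<configuration>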

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>slave3</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

<description>YARN 集群为 MapReduce 程序提供的 shuffle 服务</description>

</property>

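</configuration>

6. Configure slaves

List every host that should run a DataNode and a NodeManager, one per line. Here that is all four nodes:

[hadoop@master hadoop]$ vim slaves

master

slave1

slave2

slave3

3.3 Slave node configuration [run on all slave nodes]

[v_error]The Hadoop installation directory must be the same on every server, and so must its configuration.[/v_error]

On slave1 (and likewise on slave2 and slave3), again as the hadoop user:

[hadoop@slave1 ~]$ mkdir apps

Then on the master node: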
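The per-node steps in this section can also be run as one loop from master, as the hadoop user. The steps are: create ~/apps on each slave, then copy the configured tree from master. The following is a minimal sketch that assumes the passwordless SSH from section II is in place. The function name `sync_hadoop_to` and the variable names are hypothetical. The sketch copies the hadoop-2.9.1 directory into ~/apps/ rather than onto ~/apps/hadoop-2.9.1/. This should avoid nesting a second copy inside an existing directory if the copy is repeated.

```sh
# Copy the configured Hadoop tree from master to one slave node.
# Assumptions: run on master as user hadoop, passwordless SSH to each slave,
# and the same ~/apps layout on every node.
HADOOP_DIR_NAME=hadoop-2.9.1
SLAVES="slave1 slave2 slave3"

sync_hadoop_to() (
    host=$1
    # Single quotes: ~ must expand on the remote host, not locally.
    ssh "hadoop@$host" 'mkdir -p ~/apps' || exit 1
    # A relative remote path is resolved against the remote user's home directory.
    scp -r "$HOME/apps/$HADOOP_DIR_NAME" "hadoop@$host:apps/"
)

# Usage:
#   for h in $SLAVES; do sync_hadoop_to "$h" || echo "failed: $h"; done
```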

[hadoop@master hadoop]$ scp -r ~/apps/hadoop-2.9.1/ hadoop@slave1:~/apps/hadoop-2.9.1/

[hadoop@master hadoop]$ scp -r ~/apps/hadoop-2.9.1/ hadoop@slave2:~/apps/hadoop-2.9.1/

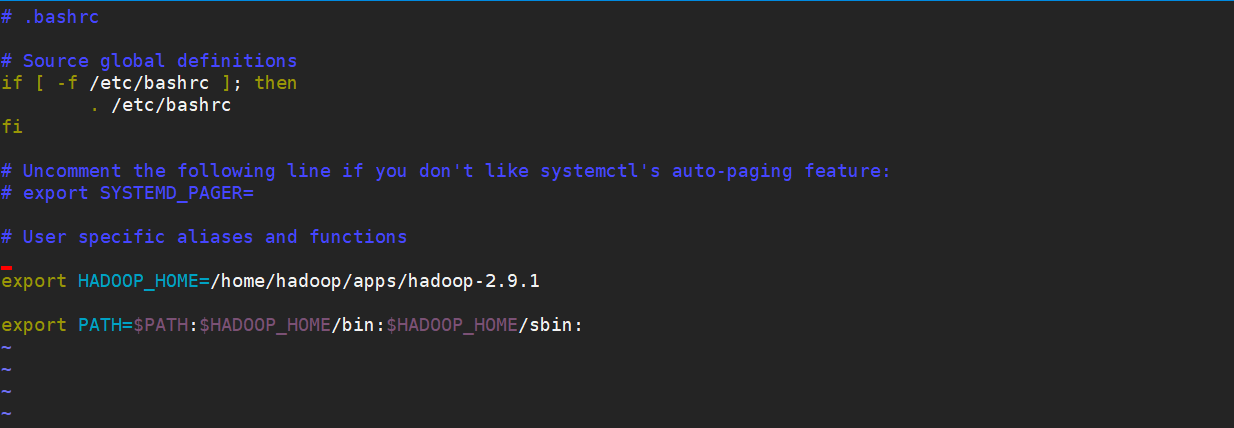

[hadoop@master hadoop]$ scp -r ~/apps/hadoop-2.9.1/ hadoop@slave3:~/apps/hadoop-2.9.1/

3.4 Configure Hadoop environment variables [run on all nodes]

[v_error]

Important:

1. If you install as root, edit /etc/profile (system-wide variables).

2. If you install as a regular user, edit ~/.bashrc (per-user variables). This guide installs as the hadoop user.

[/v_error]

[hadoop@master ~]$ pwd

/home/hadoop

[hadoop@master ~]$ vim .bashrc

# User specific aliases and functions

export HADOOP_HOME=/home/hadoop/apps/hadoop-2.9.1

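export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Then reload the file with source ~/.bashrc (or log in again) so that the variables take effect. Do not leave a trailing colon at the end of PATH, because an empty entry adds the current directory to the search path.

IV. Initialize Hadoop [run on the master node]

[v_error]Initialize (format) HDFS only on the HDFS master node that runs the NameNode. In this setup that is master.[/v_error]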
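Formatting is a one-time step. Each format generates a new clusterID (see the "Formatting using clusterid" line in the log below). DataNodes that already hold data under the previous ID typically refuse to register after a re-format. The following is a minimal guard sketch. It assumes the name directory configured in hdfs-site.xml (/home/hadoop/data/hadoopdata/name) and uses hdfs namenode -format, the non-deprecated form that the DEPRECATED message in the log recommends. The function name is hypothetical.

```sh
# Format the NameNode only if its metadata directory has never been formatted.
# A formatted directory contains current/VERSION, which records the clusterID.
NAME_DIR=/home/hadoop/data/hadoopdata/name

format_namenode_once() (
    dir=${1:-$NAME_DIR}
    if [ -f "$dir/current/VERSION" ]; then
        echo "already formatted: $dir (skipping)" >&2
        exit 0
    fi
    hdfs namenode -format
)

# Usage (on master, as hadoop):
#   format_namenode_once
```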

[hadoop@master ~]$ hadoop namenode -format

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

18/12/12 17:41:34 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = master/172.20.2.68

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.9.1

STARTUP_MSG: classpath = /home/hadoop/apps/hadoop-2.9.1/etc/hadoop:/home/hadoop/apps/hadoop-2.9.1/share/hadoop/common/lib/commons-collections-3.2.2.jar:...:/home/hadoop/apps/hadoop-2.9.1/contrib/capacity-scheduler/*.jar

STARTUP_MSG: build = https://github.com/apache/hadoop.git -r e30710aea4e6e55e69372929106cf119af06fd0e; compiled by 'root' on 2018-04-16T09:33Z

STARTUP_MSG: java = 1.8.0_191

************************************************************/

18/12/12 17:41:34 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]

18/12/12 17:41:34 INFO namenode.NameNode: createNameNode [-format]

18/12/12 17:41:35 WARN common.Util: Path /home/hadoop/data/hadoopdata/name should be specified as a URI in configuration files. Please update hdfs configuration.

18/12/12 17:41:35 WARN common.Util: Path /home/hadoop/data/hadoopdata/name should be specified as a URI in configuration files. Please update hdfs configuration.

Formatting using clusterid: CID-ff72e1be-35d7-446a-ad40-8a4418fd5ca6

18/12/12 17:41:35 INFO namenode.FSEditLog: Edit logging is async:true

18/12/12 17:41:35 INFO namenode.FSNamesystem: KeyProvider: null

18/12/12 17:41:35 INFO namenode.FSNamesystem: fsLock is fair: true

18/12/12 17:41:35 INFO namenode.FSNamesystem: Detailed lock hold time metrics enabled: false

18/12/12 17:41:35 INFO namenode.FSNamesystem: fsOwner = hadoop (auth:SIMPLE)

18/12/12 17:41:35 INFO namenode.FSNamesystem: supergroup = supergroup

18/12/12 17:41:35 INFO namenode.FSNamesystem: isPermissionEnabled = true

18/12/12 17:41:35 INFO namenode.FSNamesystem: HA Enabled: false

18/12/12 17:41:35 INFO common.Util: dfs.datanode.fileio.profiling.sampling.percentage set to 0. Disabling file IO profiling

18/12/12 17:41:35 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit: configured=1000, counted=60, effected=1000

18/12/12 17:41:35 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true

18/12/12 17:41:35 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000

18/12/12 17:41:35 INFO blockmanagement.BlockManager: The block deletion will start around 2018 Dec 12 17:41:35

18/12/12 17:41:35 INFO util.GSet: Computing capacity for map BlocksMap

18/12/12 17:41:35 INFO util.GSet: VM type = 64-bit

18/12/12 17:41:35 INFO util.GSet: 2.0% max memory 889 MB = 17.8 MB

18/12/12 17:41:35 INFO util.GSet: capacity = 2^21 = 2097152 entries

18/12/12 17:41:35 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false

18/12/12 17:41:35 WARN conf.Configuration: No unit for dfs.heartbeat.interval(3) assuming SECONDS

18/12/12 17:41:35 WARN conf.Configuration: No unit for dfs.namenode.safemode.extension(30000) assuming MILLISECONDS

18/12/12 17:41:35 INFO blockmanagement.BlockManagerSafeMode: dfs.namenode.safemode.threshold-pct = 0.9990000128746033

18/12/12 17:41:35 INFO blockmanagement.BlockManagerSafeMode: dfs.namenode.safemode.min.datanodes = 0

18/12/12 17:41:35 INFO blockmanagement.BlockManagerSafeMode: dfs.namenode.safemode.extension = 30000

18/12/12 17:41:35 INFO blockmanagement.BlockManager: defaultReplication = 2

18/12/12 17:41:35 INFO blockmanagement.BlockManager: maxReplication = 512

18/12/12 17:41:35 INFO blockmanagement.BlockManager: minReplication = 1

18/12/12 17:41:35 INFO blockmanagement.BlockManager: maxReplicationStreams = 2

18/12/12 17:41:35 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000

18/12/12 17:41:35 INFO blockmanagement.BlockManager: encryptDataTransfer = false

18/12/12 17:41:35 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000

18/12/12 17:41:35 INFO namenode.FSNamesystem: Append Enabled: true

18/12/12 17:41:35 INFO util.GSet: Computing capacity for map INodeMap

18/12/12 17:41:35 INFO util.GSet: VM type = 64-bit

18/12/12 17:41:35 INFO util.GSet: 1.0% max memory 889 MB = 8.9 MB

18/12/12 17:41:35 INFO util.GSet: capacity = 2^20 = 1048576 entries

18/12/12 17:41:35 INFO namenode.FSDirectory: ACLs enabled? false

18/12/12 17:41:35 INFO namenode.FSDirectory: XAttrs enabled? true

18/12/12 17:41:35 INFO namenode.NameNode: Caching file names occurring more than 10 times

18/12/12 17:41:35 INFO snapshot.SnapshotManager: Loaded config captureOpenFiles: falseskipCaptureAccessTimeOnlyChange: false

18/12/12 17:41:35 INFO util.GSet: Computing capacity for map cachedBlocks

18/12/12 17:41:35 INFO util.GSet: VM type = 64-bit

18/12/12 17:41:35 INFO util.GSet: 0.25% max memory 889 MB = 2.2 MB

18/12/12 17:41:35 INFO util.GSet: capacity = 2^18 = 262144 entries

18/12/12 17:41:35 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10

18/12/12 17:41:35 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10

18/12/12 17:41:35 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25

18/12/12 17:41:35 INFO namenode.FSNamesystem: Retry cache on namenode is enabled

18/12/12 17:41:35 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis

18/12/12 17:41:35 INFO util.GSet: Computing capacity for map NameNodeRetryCache

18/12/12 17:41:35 INFO util.GSet: VM type = 64-bit

18/12/12 17:41:35 INFO util.GSet: 0.029999999329447746% max memory 889 MB = 273.1 KB

18/12/12 17:41:35 INFO util.GSet: capacity = 2^15 = 32768 entries

18/12/12 17:41:35 INFO namenode.FSImage: Allocated new BlockPoolId: BP-1184452977-172.20.2.68-1544607695499

18/12/12 17:41:35 INFO common.Storage: Storage directory /home/hadoop/data/hadoopdata/name has been successfully formatted.

18/12/12 17:41:35 INFO namenode.FSImageFormatProtobuf: Saving image file /home/hadoop/data/hadoopdata/name/current/fsimage.ckpt_0000000000000000000 using no compression

18/12/12 17:41:35 INFO namenode.FSImageFormatProtobuf: Image file /home/hadoop/data/hadoopdata/name/current/fsimage.ckpt_0000000000000000000 of size 323 bytes saved in 0 seconds .

18/12/12 17:41:35 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0

18/12/12 17:41:35 INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at master/172.20.2.68

************************************************************/

V. Start Hadoop

5.1 Start HDFS (can be run from any node in the HDFS cluster)

[hadoop@master ~]$ start-dfs.sh

Starting namenodes on [master]

The authenticity of host 'master (172.20.2.68)' can't be established.

ECDSA key fingerprint is 81:c3:e3:15:8e:6e:65:d8:e8:0c:c0:c8:0b:e7:e5:07.

Are you sure you want to continue connecting (yes/no)? yes

master: Warning: Permanently added 'master' (ECDSA) to the list of known hosts.

master: starting namenode, logging to /home/hadoop/apps/hadoop-2.9.1/logs/hadoop-hadoop-namenode-master.out

master: starting datanode, logging to /home/hadoop/apps/hadoop-2.9.1/logs/hadoop-hadoop-datanode-master.out

slave2: starting datanode, logging to /home/hadoop/apps/hadoop-2.9.1/logs/hadoop-hadoop-datanode-slave2.out

slave1: starting datanode, logging to /home/hadoop/apps/hadoop-2.9.1/logs/hadoop-hadoop-datanode-slave1.out

slave3: starting datanode, logging to /home/hadoop/apps/hadoop-2.9.1/logs/hadoop-hadoop-datanode-slave3.out

Starting secondary namenodes [slave2]

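slave2: starting secondarynamenode, logging to /home/hadoop/apps/hadoop-2.9.1/logs/hadoop-hadoop-secondarynamenode-slave2.out

5.2 Start YARN (run on the YARN master node, the ResourceManager host)

[v_error]start-yarn.sh starts the ResourceManager on the machine where it is run, so it must be run on the ResourceManager node. In this cluster that is slave3.[/v_error]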

[hadoop@slave3 ~]$ start-yarn.sh

starting yarn daemons

starting resourcemanager, logging to /home/hadoop/apps/hadoop-2.9.1/logs/yarn-hadoop-resourcemanager-slave3.out

slave2: starting nodemanager, logging to /home/hadoop/apps/hadoop-2.9.1/logs/yarn-hadoop-nodemanager-slave2.out

master: starting nodemanager, logging to /home/hadoop/apps/hadoop-2.9.1/logs/yarn-hadoop-nodemanager-master.out

slave1: starting nodemanager, logging to /home/hadoop/apps/hadoop-2.9.1/logs/yarn-hadoop-nodemanager-slave1.out

slave3: starting nodemanager, logging to /home/hadoop/apps/hadoop-2.9.1/logs/yarn-hadoop-nodemanager-slave3.out

VI. Check the processes on the four servers

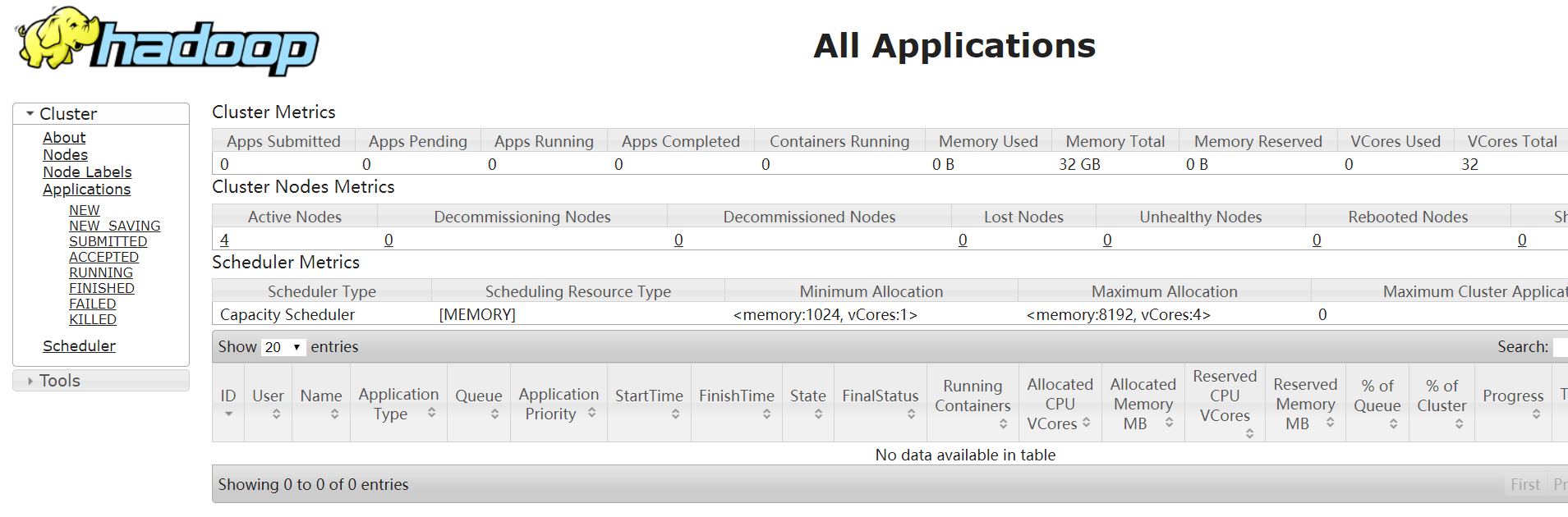

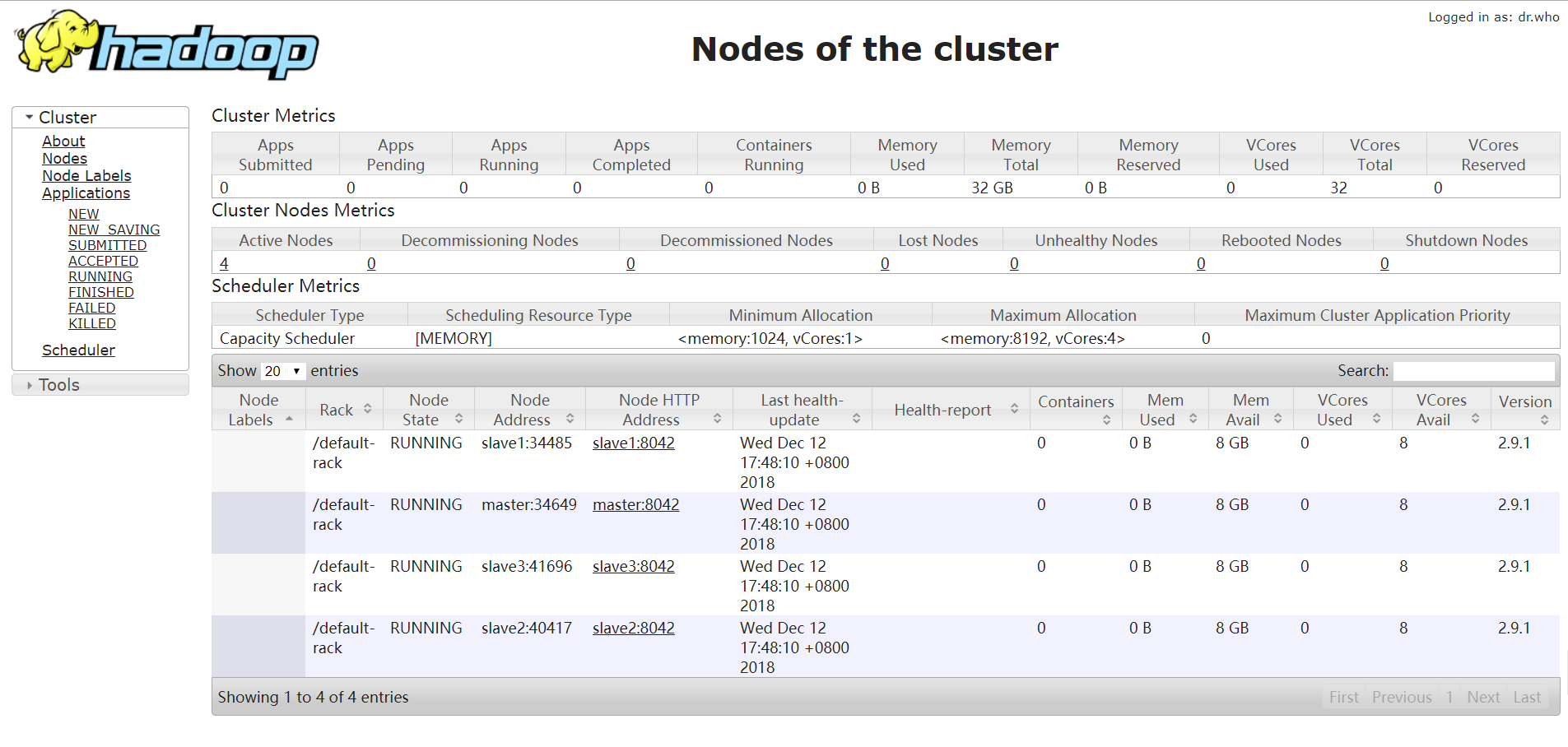

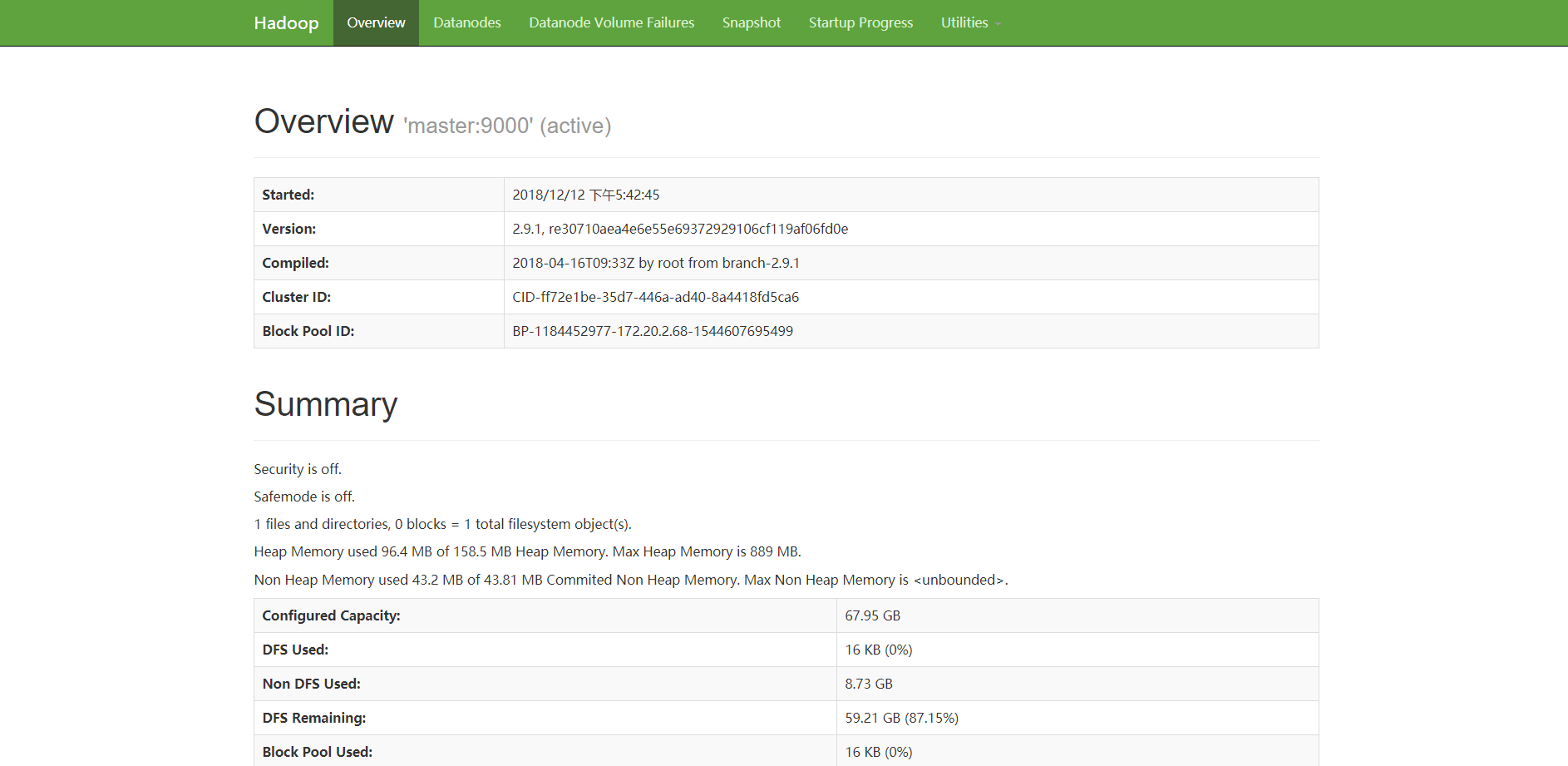
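The processes can also be checked from master in one loop instead of logging in to each server. The following is a minimal sketch that assumes passwordless SSH. It also assumes that jps (part of the JDK installed by java-1.8.0-openjdk-devel) is found by a login shell: bash -lc reads /etc/profile, which loads /etc/profile.d/java.sh. The expected daemons per node come from the role tables in section I. The helper names `expected_daemons` and `missing_daemons` are hypothetical.

```sh
# Expected Java daemons per node, taken from the HDFS/YARN role tables.
expected_daemons() {
    case $1 in
        master) echo "NameNode DataNode NodeManager" ;;
        slave1) echo "DataNode NodeManager" ;;
        slave2) echo "DataNode SecondaryNameNode NodeManager" ;;
        slave3) echo "DataNode NodeManager ResourceManager" ;;
        *)      echo "" ;;
    esac
}

# Print each expected daemon that is absent from the given jps output.
# jps prints "<pid> <MainClass>" per line, so compare the second field exactly.
missing_daemons() (
    host=$1
    jps_output=$2
    for d in $(expected_daemons "$host"); do
        printf '%s\n' "$jps_output" |
            awk -v d="$d" '$2 == d { found = 1 } END { exit !found }' || echo "$d"
    done
)

# Usage (on master, as hadoop):
#   for h in master slave1 slave2 slave3; do
#       out=$(ssh -o BatchMode=yes "hadoop@$h" 'bash -lc jps')
#       echo "$h missing: $(missing_daemons "$h" "$out" | tr '\n' ' ')"
#   done
```

An empty "missing" list for every host means all daemons from the role tables are running.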

VII. View the HDFS and YARN web UIs

7.1 View the HDFS web UI

7.2 View the YARN web UI

Visit: http://172.20.2.110:8088/cluster
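The node counts shown in the web UIs can also be checked from the command line. The following is a minimal sketch that assumes curl is installed and the Hadoop 2.x default web ports: 50070 for the NameNode on master (not shown in this guide), and 8088 for the ResourceManager on slave3, as in the URL above. It reads NumLiveDataNodes from the NameNode's /jmx servlet (FSNamesystemState bean) and activeNodes from the ResourceManager's /ws/v1/cluster/metrics REST endpoint. With all four nodes up, both should report 4. Field extraction uses sed rather than a JSON parser, so it only suits simple numeric fields that appear once. The helper `json_number` is hypothetical.

```sh
# Extract the numeric value of a JSON field such as "activeNodes": 4.
# Deliberately simple: assumes the field is numeric and appears once; not a general JSON parser.
json_number() {
    printf '%s' "$2" | tr -d '\n' |
        sed -n "s/.*\"$1\"[[:space:]]*:[[:space:]]*\([0-9][0-9]*\).*/\1/p"
}

# Usage:
#   nn=$(curl -s 'http://172.20.2.68:50070/jmx?qry=Hadoop:service=NameNode,name=FSNamesystemState')
#   rm=$(curl -s 'http://172.20.2.110:8088/ws/v1/cluster/metrics')
#   echo "live DataNodes:      $(json_number NumLiveDataNodes "$nn")"
#   echo "active NodeManagers: $(json_number activeNodes "$rm")"
```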